
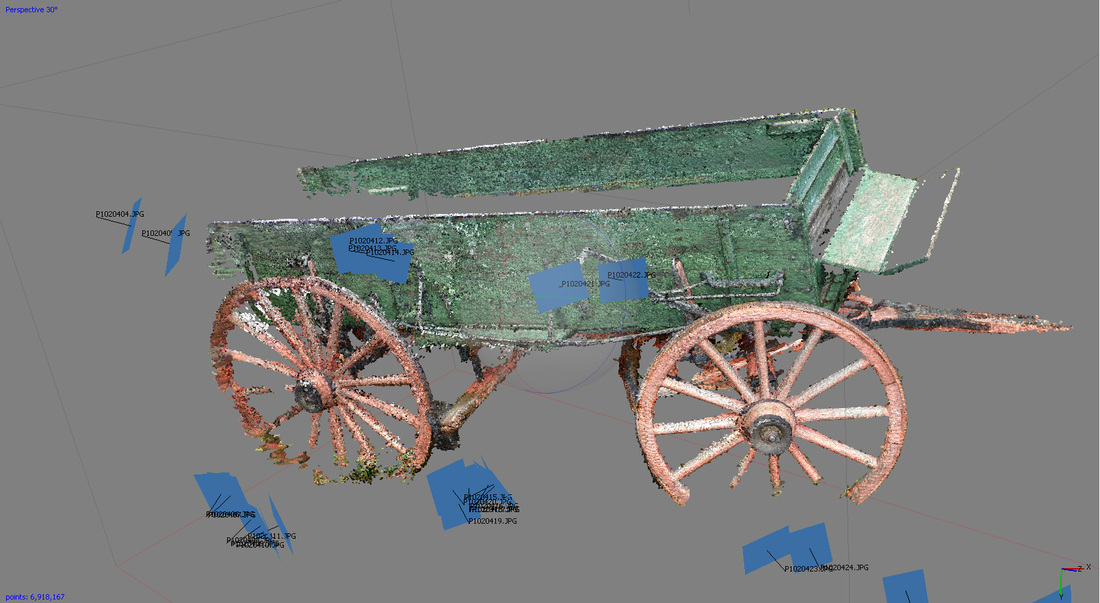

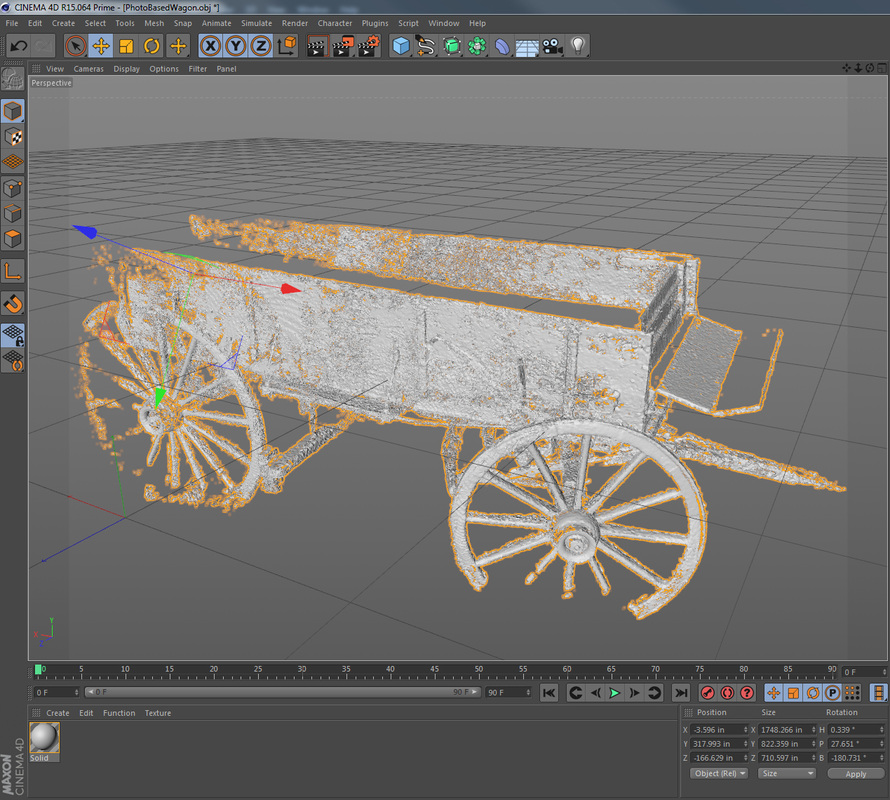

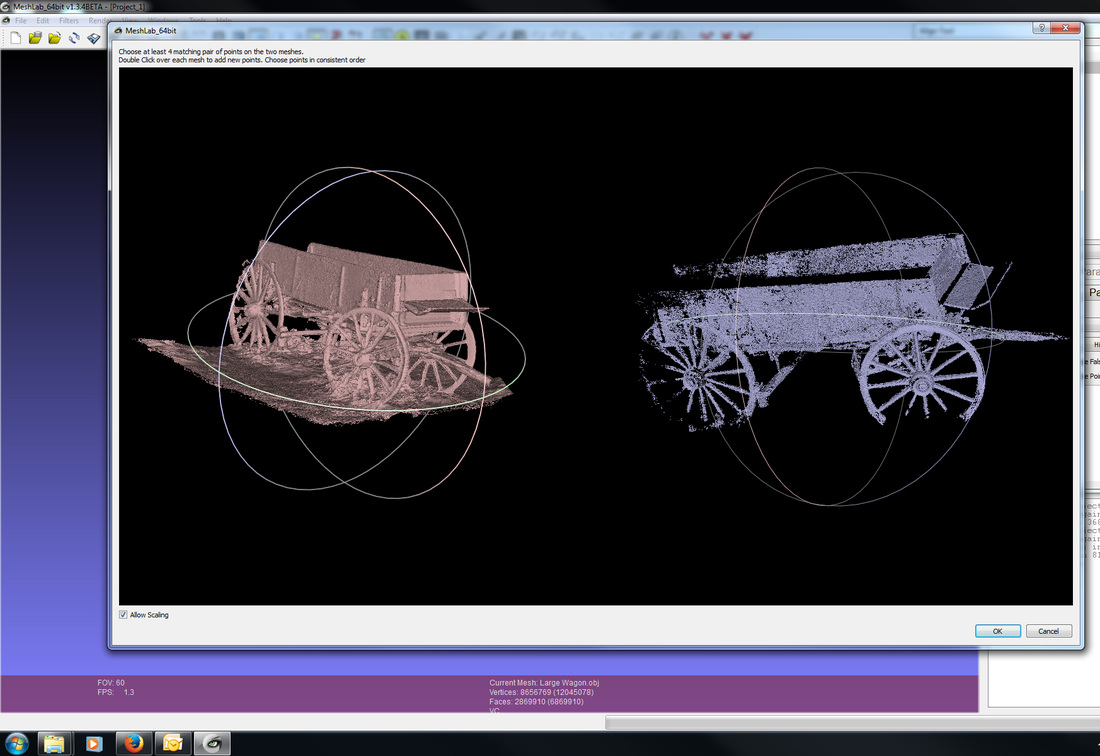

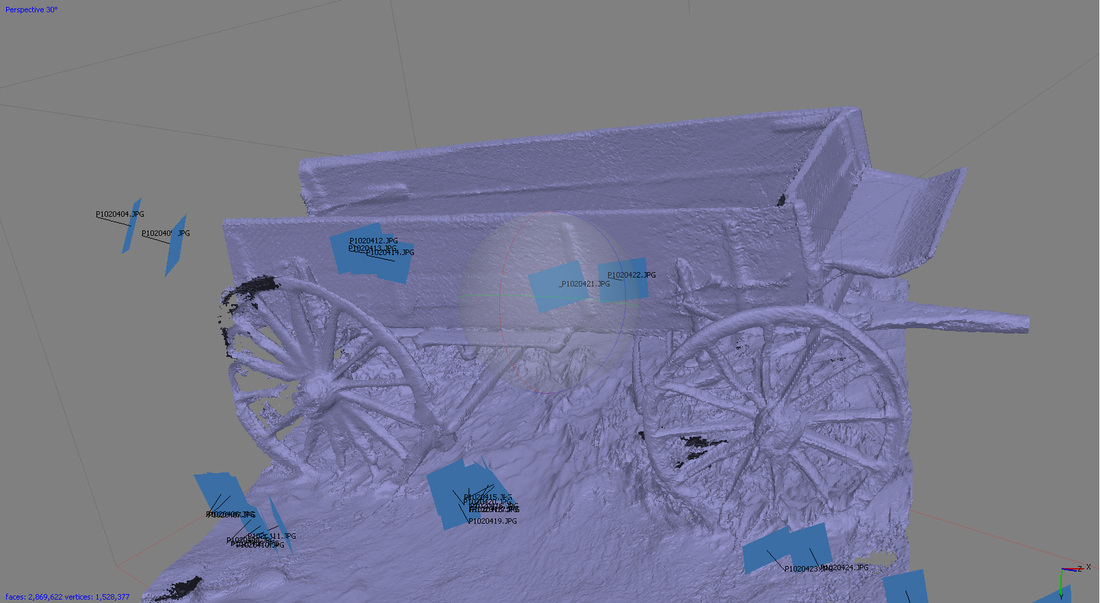

I bought the Kinect v2 sensor, and it has been a bit of a letdown, though probably not for the reasons you might think. There are two main reasons: (1) vendors have been confounded by it and slow to support it, and (2) it still has the same lame color resolution. While I have no answers on how to get vendors to support it sooner, I have been able to attack the problem of getting higher-resolution color images for my Kinect scans. That is what I'd like to share with you.

My first brush with the idea of affixing higher-quality color imagery to Kinect scans came when I had the good fortune of connecting with the engineers over at Lynx Labs. They were still a yet-to-be-launched Kickstarter at the time, and we talked at length about my fanaticism for depth mapping (and my many failed attempts at scanning for film-making) and their plans to give a PrimeSense sensor great software powers and make it an all-in-one, appliance-type solution. As their development progressed we discussed desired features quite a bit. As a filmmaker, my demands can be summarized as needing great (and sophisticated) color/texture resolution and detail, plus a little bit of depth, whereas an engineering-heavy group might need super accuracy in their 3D information and not need color at all.

Lynx Labs deployed a Raster Alignment feature in the software system of their A-cam and it was simply brilliant. It was based loosely on a similar Raster Alignment tool available in Meshlab (which I have had very little success with). The results of applying high-fidelity imagery to a mid-quality 3D scan were fantastic.

So how do I go about reproducing this result with my Kinect scans?

Step One: Gather Photo Reference Under Ideal Lighting

An overcast day is great, but selecting an object in shadow is equally valuable.
The reason is that we are trying to capture the subject in ambient lighting so that we get a clean diffuse texture (with no directional lighting baked in) that can be synthetically re-lit once it is on a 3D model.

Step Two

Step Three: Kinect Scan Your Subject

The Kinect can be a bit finicky and limited, but you need to get your scan. The Kinect can sometimes scan successfully on an overcast day, but don't count on it. Wait until dark or use a tent to knock out the sunlight. In this particular scan attempt it was sleeting on me (making me rather impatient and cold). The cold also makes your cables go rather rigid, so things get a little more clumsy. To get the Kinect powered out there, I brought a battery backup with me.

I used the Kinect Fusion SDK demo to do the scanning, and it has a few limitations. Warning: it is EXTREMELY easy to lose scanner alignment on the Kinect. Personally, I'd like to see a software package that uses a second Kinect to motion capture your skeleton and keep track of where you're holding the device in 3D space. Really, anything that helps you NOT lose sync would be nice. Take your time.

Just to give you an idea of how finicky this device is: I kept losing sync and couldn't figure out why. I happened to notice that I would often lose sync when I exhaled. I tested the relationship and found that when I exhaled, the heat of my breath (remember, it was really cold outside) acted like a cloud of darkness to the scanner. So every time my breath wafted past the sensor it would go blind. Haha! Crazy finicky!

Step Four

Step Five: Align Both Scans in Meshlab

Meshlab is a free, open-source 3D software platform for processing scans.

1. Import both models.
2. Press the Align button in the top toolbar.
3. Select the Photo Scan derived model and select "Glue Mesh."
4. Select the Kinect Scan derived model and select "Point Based Glueing."
5. A point matching window will pop up. Check the box to "Allow Scaling".
6. Set four matching points on each model and click OK.
7. If the alignment is reasonably close, click the "Process" button in the Align Tool window.
8. Close the Align Tool window and then right-click on your aligned Kinect scanned model.
9. A long menu of options will drop down; select "Freeze Current Matrix". A window will pop up.
10. Hit "Apply."

Now export just your aligned Kinect scanned model. You're done here.

Step Six

Step Seven

Conclusion

The alignment of your end texture will hinge entirely on the alignment between the two models in Meshlab, so don't be afraid to redo that a couple of times until you are satisfied with the results. Try picking different matching points and then processing again. See how low you can get that alignment error. At the end of the day, you've got a well-textured Kinect scan (please ignore the ugly inaccuracies of my test project wagon).
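A side note on Step Five, for anyone curious what a point-based alignment with scaling allowed boils down to. Below is a minimal Python sketch, assuming NumPy is installed, of a least-squares similarity transform (scale, rotation, translation) fitted to a handful of matched points, plus the leftover error after the fit. I am not claiming this is what Meshlab runs internally; it uses the well-known Umeyama method as a stand-in. The function names (`estimate_similarity`, `apply_transform`, `rms_error`) and the sample coordinates are made up for illustration.

```python
import numpy as np


def estimate_similarity(src, dst):
    """Fit a 4x4 matrix (scale + rotation + translation) that maps the
    src points onto the dst points in the least-squares sense (Umeyama)."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    src_mean = src.mean(axis=0)
    dst_mean = dst.mean(axis=0)
    src_c = src - src_mean
    dst_c = dst - dst_mean

    # Cross-covariance between the two centered point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)

    # Flip the last axis if the best fit would otherwise be a mirror image.
    D = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        D[2, 2] = -1.0

    R = U @ D @ Vt
    src_var = (src_c ** 2).sum() / len(src)
    scale = np.trace(np.diag(S) @ D) / src_var
    t = dst_mean - scale * R @ src_mean

    # Pack everything into one 4x4 matrix, the kind of matrix that gets
    # baked into the vertices when a transform is frozen.
    M = np.eye(4)
    M[:3, :3] = scale * R
    M[:3, 3] = t
    return M


def apply_transform(M, points):
    """Apply a 4x4 transform to an (N, 3) array of points."""
    points = np.asarray(points, dtype=float)
    return points @ M[:3, :3].T + M[:3, 3]


def rms_error(M, src, dst):
    """Root-mean-square distance between transformed src points and dst."""
    diff = apply_transform(M, src) - np.asarray(dst, dtype=float)
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))


if __name__ == "__main__":
    # Made-up example: four points picked on the Kinect scan and the
    # matching four points picked on the photo scan (not real data).
    kinect_pts = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    photo_pts = [[2.0, 1.0, 0.5], [4.1, 1.0, 0.4], [2.0, 3.0, 0.6], [1.9, 1.1, 2.5]]

    M = estimate_similarity(kinect_pts, photo_pts)
    print("4x4 transform:\n", M)
    print("RMS error after alignment:", rms_error(M, kinect_pts, photo_pts))
```

The takeaway matches my experience in the Align tool: with only four point pairs, one sloppy pick moves the whole fit, so re-picking points and watching the error drop is time well spent.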

My test project was just that: a poor-looking Kinect scan meant to test whether I could bring higher-quality images into alignment with my model. The wonderful part about using this approach (versus aligning each photo in Meshlab, which I cannot seem to get to work well for me) is that it saves you a lot of time by essentially aligning all the cameras at once. Basically, the camera alignment in Agisoft is used across the new model. While the Kinect is a rather finicky device to scan with, it is capable of resolving scans in many situations that are inherently problematic for photo scanning (e.g., flat surfaces with low texture information). Enjoy, and please share with me your successes or improvements on this workflow.


Permissions & Copyrights

Please feel free to use our 3D scans in your commercial productions. Credit is always welcome but not required.

Archives

April 2018

Daniel

Staying busy dreaming of synthetic film making while working as a VFX artist and scratching out time to write novels and be a dad to three.